The automotive industry is undergoing a technological shift driven by advances in automation, artificial intelligence, and sensing. At the core of this change is a trio of perception systems: LiDAR, Radar, and Cameras. These devices are the eyes of modern cars, enabling features such as Advanced Driver Assistance Systems (ADAS) and paving the way toward full vehicle autonomy.

At NextGenTechLabs, we regularly investigate how industries tackle these challenges through AI, edge computing, and sensor innovation. These sensors work together to interpret the world in real time, whether the task is detecting obstacles, keeping a safe distance, identifying traffic signs, or maneuvering through tricky surroundings.

In this blog, we’ll discuss how LiDAR, Radar, and Cameras work, where each is strong and weak, and why sensor fusion has become the future of intelligent cars.

Understanding the Role of Car Sensors

Before delving into each technology, it helps to understand why sensors are indispensable in modern automobiles.

Human drivers rely on vision, hearing, and intuition to make decisions on the road. Autonomous and semi-autonomous vehicles must imitate, and ideally exceed, these capabilities. Their sensors gather raw data about the vehicle's surroundings, which onboard computers then process to make driving decisions.

Modern vehicles rely on three major types of sensors:

- LiDAR (Light Detection and Ranging)

- Radar (Radio Detection and Ranging)

- Cameras (Vision-based systems)

Each of these sensors observes the environment using a different physical principle, which makes them complementary rather than competing.

What is LiDAR and How Does It Work?

LiDAR is regarded as one of the most sophisticated sensor technologies in autonomous vehicles. It measures distances with laser pulses and builds a detailed three-dimensional representation of the environment.

Working Principle

LiDAR systems emit rapid pulses of laser light into the surroundings. When a pulse strikes an object, it is reflected, and the system measures the time the light takes to return. This is known as time-of-flight measurement.

From these measurements, LiDAR builds an accurate 3D representation of the environment, known as a point cloud.
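To make the time-of-flight idea concrete, here is a minimal Python sketch. It assumes the sensor reports a round-trip time and the beam's azimuth and elevation angles; the function names `tof_distance_m` and `to_point` are illustrative and do not come from any particular LiDAR SDK.

```python
import math

SPEED_OF_LIGHT_M_S = 299_792_458.0  # speed of light in vacuum


def tof_distance_m(round_trip_s: float) -> float:
    """Distance to the reflecting surface, given the round-trip pulse time."""
    # The pulse travels to the object and back, so halve the total path.
    return SPEED_OF_LIGHT_M_S * round_trip_s / 2.0


def to_point(distance_m: float, azimuth_rad: float, elevation_rad: float):
    """Convert one range reading plus beam angles into an (x, y, z) point."""
    horizontal = distance_m * math.cos(elevation_rad)
    x = horizontal * math.cos(azimuth_rad)
    y = horizontal * math.sin(azimuth_rad)
    z = distance_m * math.sin(elevation_rad)
    return (x, y, z)


if __name__ == "__main__":
    # Hypothetical reading: a pulse that returns after 200 nanoseconds.
    distance = tof_distance_m(200e-9)
    print(f"distance: {distance:.2f} m")
    print(to_point(distance, math.radians(30.0), 0.0))
```

A 200-nanosecond round trip works out to roughly 30 meters. Repeating this conversion for every pulse in a sweep is what produces the point cloud.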

Key Features of LiDAR

- High-resolution 3D mapping

- Accurate distance estimation

- Strong object detection and classification

- Performs well in dark environments

Advantages

- Highly accurate spatial perception

- Well suited to mapping and localization

- Reliable performance in the dark

Limitations

- Costly compared with other sensors

- Performance can degrade in fog, rain, or snow

- Some units contain mechanical parts that can wear out

What is Radar and How Does It Work?

Radar has been used in weather systems and aircraft for decades and is now an essential component of vehicles.

Working Principle

Radar systems emit radio waves that are reflected by objects. By analyzing the reflected signals, the system can determine:

- Distance to an object

- Speed (using the Doppler effect)

- Direction of movement
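The speed measurement can be sketched in a few lines of Python. This is a simplified illustration that assumes a 77 GHz carrier, a band commonly used by automotive radar, and that the Doppler shift has already been extracted from the signal. The function name is illustrative. Note that the result is only the radial component of speed, along the line between the radar and the object.

```python
SPEED_OF_LIGHT_M_S = 299_792_458.0  # speed of light in vacuum


def radial_speed_m_s(doppler_shift_hz: float, carrier_hz: float = 77e9) -> float:
    """Radial speed of a target from the measured Doppler shift.

    The wave is shifted once on the way out and once on the way back,
    so f_d = 2 * v * f0 / c, which rearranges to v = f_d * c / (2 * f0).
    A positive shift means the target is approaching.
    """
    return doppler_shift_hz * SPEED_OF_LIGHT_M_S / (2.0 * carrier_hz)


if __name__ == "__main__":
    # Hypothetical measurement: a shift of about 5.1 kHz at 77 GHz.
    print(f"{radial_speed_m_s(5133.0):.2f} m/s")
```

With these assumed numbers, a shift of about 5.1 kHz corresponds to a closing speed of roughly 10 m/s.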

Key Features of Radar

- Long-range detection

- Direct speed measurement

- Robust in bad weather

Advantages

- Works well in rain, fog, and dust

- Cheaper than LiDAR

- Good at detecting moving objects

Limitations

- Lower resolution than LiDAR

- Difficulty distinguishing objects that are close together

- Limited object classification ability

What are Cameras and How Do They Work?

Cameras are the most intuitive sensors because they work much like the human eye. They capture visual information as still images or video feeds.

Working Principle

Cameras use image sensors to capture light and convert it into digital images. These images are then processed with computer vision algorithms and artificial intelligence models to detect objects, lanes, signs, pedestrians, and more.

Key Features of Cameras

- High-resolution visual data

- Recognition of color and texture

- Ability to read signs and signals

Advantages

- Economical and widely available

- Rich contextual information

- Critical to object classification

Limitations

- Performance depends on lighting conditions

- Struggles in low light or glare

- Poor depth perception without further processing
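One common form of the further processing that recovers depth is stereo vision, where two cameras a known distance apart view the same scene. The sketch below assumes an idealized, rectified stereo pair and a disparity that has already been measured in pixels. The function name and the example numbers are illustrative only.

```python
def stereo_depth_m(focal_px: float, baseline_m: float, disparity_px: float) -> float:
    """Depth of a point seen by a rectified stereo pair.

    focal_px:     focal length expressed in pixels
    baseline_m:   distance between the two camera centers
    disparity_px: horizontal shift of the point between the two images
    """
    if disparity_px <= 0:
        raise ValueError("disparity must be positive to recover depth")
    # Similar triangles give depth = focal length * baseline / disparity.
    return focal_px * baseline_m / disparity_px


if __name__ == "__main__":
    # Assumed setup: 1000 px focal length, 12 cm baseline, 8 px disparity.
    print(f"{stereo_depth_m(1000.0, 0.12, 8.0):.1f} m")
```

Because depth is inversely proportional to disparity, distant objects shift by only a pixel or two, which is one reason camera-only depth becomes less precise at long range.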

LiDAR vs Radar vs Cameras: A Comparative Overview

Comparing these sensors side by side makes it clear why today's vehicles need all three.

1. Detection Capability

- LiDAR: Ideal for accurate 3D mapping and object positioning

- Radar: Ideal for measuring the speed and distance of moving objects

- Cameras: Best for recognizing objects and interpreting visual cues

2. Environmental Performance

- LiDAR: Sensitive to weather

- Radar: Robust in nearly all weather

- Cameras: Sensitive to lighting and visibility

3. Cost

- LiDAR: Most expensive

- Radar: Moderately priced

- Cameras: Most affordable

4. Data Output

- LiDAR: 3D point cloud

- Radar: Range and speed information

- Cameras: Visual images

Why Sensor Fusion is the Future

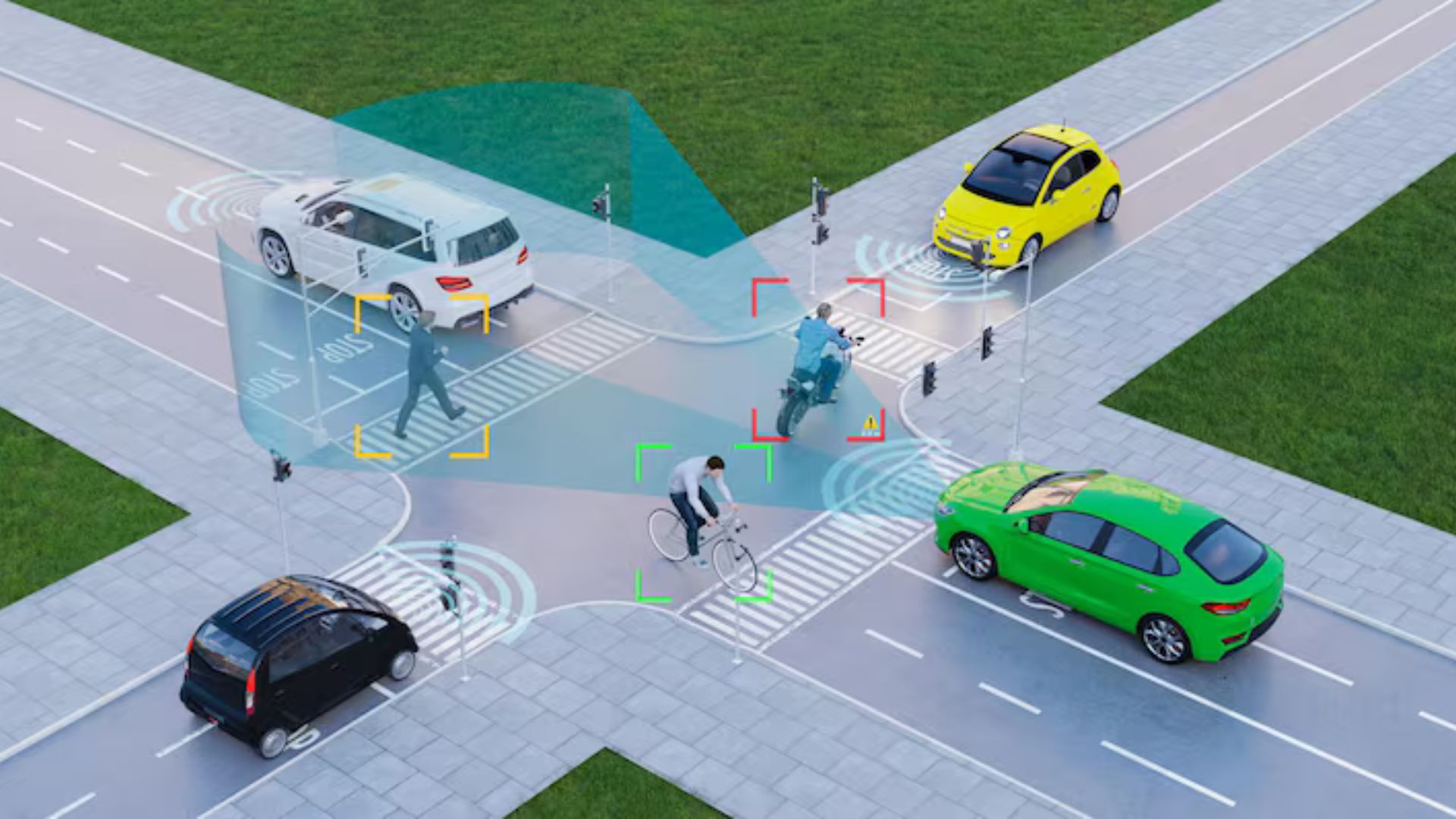

No single sensor is perfect. Each has strengths and weaknesses, which is why today’s cars rely on sensor fusion: combining information from multiple sensors to form a complete picture of the surrounding space.

How Sensor Fusion Works

Sensor fusion combines data from LiDAR, Radar, and Cameras to:

- Improve accuracy

- Reduce uncertainty

- Enhance safety

- Enable redundancy

For example:

- Cameras identify a pedestrian.

- LiDAR measures the precise distance.

- Radar determines how fast the pedestrian is moving.

Together, these inputs form a complete picture that lets the vehicle make better decisions.
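The pedestrian example can be sketched as a small data structure. This is a simplified illustration, not a production fusion pipeline: it assumes the three readings have already been matched to the same object, and the names `FusedObject` and `fuse` are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class FusedObject:
    label: str                # what the object is, from the camera
    distance_m: float         # how far away it is, from LiDAR
    closing_speed_m_s: float  # radial speed from radar; positive = approaching

    def time_to_collision_s(self) -> Optional[float]:
        """Seconds until contact at constant speed, or None if not closing."""
        if self.closing_speed_m_s <= 0:
            return None
        return self.distance_m / self.closing_speed_m_s


def fuse(camera_label: str, lidar_distance_m: float,
         radar_closing_speed_m_s: float) -> FusedObject:
    """Combine one reading from each sensor into a single object description."""
    return FusedObject(camera_label, lidar_distance_m, radar_closing_speed_m_s)


if __name__ == "__main__":
    pedestrian = fuse("pedestrian", lidar_distance_m=20.0,
                      radar_closing_speed_m_s=4.0)
    print(pedestrian, pedestrian.time_to_collision_s())
```

None of the three sensors alone could compute the time to collision for a labeled object. It only becomes available once the label, distance, and speed sit in one record.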

Benefits of Sensor Fusion

- Increased reliability

- Better performance in difficult conditions

- Improved object detection and classification

- Safer autonomous driving systems
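One simple way to see why combining sensors increases reliability is inverse-variance weighting, a standard technique for merging two independent measurements of the same quantity. The sketch below is an illustration under assumed noise figures, not a description of how any particular vehicle fuses its data. The function name and variances are made up for the example.

```python
def fuse_estimates(value_a: float, var_a: float,
                   value_b: float, var_b: float):
    """Merge two independent estimates, weighting each by its precision.

    Returns the fused value and its variance. The fused variance is
    always smaller than either input variance.
    """
    weight_a = 1.0 / var_a
    weight_b = 1.0 / var_b
    fused_value = (weight_a * value_a + weight_b * value_b) / (weight_a + weight_b)
    fused_var = 1.0 / (weight_a + weight_b)
    return fused_value, fused_var


if __name__ == "__main__":
    # Assumed readings: LiDAR says 20.0 m (variance 0.01),
    # radar says 20.6 m (variance 0.09).
    value, var = fuse_estimates(20.0, 0.01, 20.6, 0.09)
    print(f"fused distance: {value:.2f} m, variance: {var:.4f}")
```

The fused distance lands close to the more precise LiDAR reading, and its variance is lower than that of either sensor alone. If one sensor degrades, for example in heavy rain, its variance rises and its influence on the result shrinks automatically.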

Real-World Applications in Modern Vehicles

These sensor technologies are already at work in several vehicle features:

Advanced Driver Assistance Systems (ADAS)

- Adaptive Cruise Control (Radar-based)

- Lane Keeping Assist (Camera-based)

- Automatic Emergency Braking (Sensor fusion)

Autonomous Vehicles

Self-driving cars depend on all three sensor types to drive safely without a human driver.

Parking Assistance

Sensors and cameras help vehicles park accurately and avoid hitting obstacles.

Challenges in Sensor Integration

Although sensor fusion is very useful, it comes with challenges:

- Processing multidimensional data

- High computational costs

- Calibration and synchronization problems

- Managing large volumes of data
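The synchronization problem can be illustrated with a short sketch. Sensors typically run at different rates, so their frames need to be paired by timestamp before they can be fused. The code below assumes both sensors stamp frames on a shared clock and that timestamps arrive sorted. The 20 ms tolerance and the function names are arbitrary choices for the example.

```python
from bisect import bisect_left


def nearest_index(sorted_times, t):
    """Index of the timestamp in sorted_times closest to t."""
    i = bisect_left(sorted_times, t)
    if i == 0:
        return 0
    if i == len(sorted_times):
        return len(sorted_times) - 1
    before, after = sorted_times[i - 1], sorted_times[i]
    return i if (after - t) < (t - before) else i - 1


def pair_frames(camera_times, lidar_times, max_gap_s=0.02):
    """Pair each camera frame with the nearest LiDAR sweep in time.

    Camera frames with no sweep within max_gap_s are left unpaired
    rather than fused with stale data.
    """
    pairs = []
    if not lidar_times:
        return pairs
    for cam_idx, t in enumerate(camera_times):
        lidar_idx = nearest_index(lidar_times, t)
        if abs(lidar_times[lidar_idx] - t) <= max_gap_s:
            pairs.append((cam_idx, lidar_idx))
    return pairs


if __name__ == "__main__":
    camera_times = [0.000, 0.033, 0.066, 0.100]  # roughly 30 frames per second
    lidar_times = [0.001, 0.101]                 # roughly 10 sweeps per second
    print(pair_frames(camera_times, lidar_times))
```

In this example only the first and last camera frames find a LiDAR sweep close enough in time. The two frames in between are skipped, which shows how mismatched sensor rates limit how often fully fused data is available.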

For innovators such as NextGenTechLabs, mastering these technologies is the next step toward driving the new generation of mobility solutions.

The Future of Automotive Sensors

The future of car sensors is bright and developing rapidly. Some key trends include:

- Solid-state LiDAR that cuts costs and extends service life

- Intelligent vision that extends what cameras can do

- Sophisticated radar systems with better resolution

- Integration of 5G and V2X communication

As these technologies mature, increasingly autonomous, intelligent, and safe vehicles become possible.

Conclusion

LiDAR, Radar, and Cameras all play an important part in the future of automotive technology. LiDAR maps the environment in 3D with high precision, Radar excels at detecting motion and working in harsh conditions, and Cameras provide detailed visual context.

Rather than competing technologies, they are complementary parts of an integrated system. Thanks to sensor fusion, modern vehicles can achieve a level of perception comparable to, and in some cases better than, that of humans.

At NextGenTechLabs, we aim to unpack such complex technologies and inform readers about the innovations that will propel the next generation of mobility. As the industry becomes increasingly automated, how well these sensors are combined will determine how safely and efficiently vehicles navigate the world.

The road ahead is not merely about smarter cars, but about smarter sensing.