AI is developing faster than ever, and multimodal AI is one of the most significant developments of 2026. Earlier AI models could typically process only a single form of data, such as text or images. Today's models can understand and combine several types of data at once: text, images, audio, and even video.

This change has made AI more capable, more natural to use, and closer to the way humans take in information. A machine powered by an advanced AI system can read a paragraph, interpret a picture, watch a video, and relate all of that data to produce a useful response. This is not only a technical change; it is a shift in human-machine interaction.

At NextGenTechLabs, we closely follow such advancements to bring you insights into how next-generation AI technologies are transforming real-world applications.

One of the most prominent examples is ChatGPT, which illustrates how Multimodal AI in 2026 works in practice. It is changing our day-to-day use of AI, whether that means analyzing a screenshot, describing something we see, or summarizing a video.

In this detailed guide, we discuss how Multimodal AI in 2026 works, its core technologies, real-world applications, benefits, challenges, and future opportunities.

What is Multimodal AI?

Multimodal AI (also written multi-modal AI) is artificial intelligence software that can process and interpret multiple categories of input data at the same time. These inputs commonly include:

- Text

- Images

- Audio

- Video

Conventional AI systems were usually single-modal. For example, NLP models worked with text, whereas computer vision systems dealt with images. With Multimodal AI in 2026, these capabilities are combined in a single model, which allows richer understanding and better-informed decisions.

Key Features of Multimodal AI in 2026

- Cross-modal understanding

- Context-aware reasoning

- Real-time responses

- Enhanced user interaction

These features make Multimodal AI in 2026 more efficient and more versatile than earlier AI systems.

Evolution of AI Towards Multimodal Intelligence

The path to Multimodal AI in 2026 has passed through several stages:

1. Single-Modality AI

The first AI systems specialized in a single form of data, e.g., text or images.

2. Specialized Models

NLP, speech recognition, and computer vision each advanced separately.

3. Hybrid AI Systems

Systems then began to combine two types of data, such as text and images.

4. Multimodal AI in 2026

Modern AI models can combine all the major types of data in a single model and perform complex reasoning over them.

This progression explains why Multimodal AI in 2026 is viewed as a major new direction in AI development.

How AI Understands Text

Text understanding is the foundation of this technology. Advanced Natural Language Processing (NLP) methods allow AI systems to process human language.

Capabilities

- Context recognition

- Sentiment analysis

- Intent detection

- Language translation

- Conversational understanding

Multimodal AI in 2026 combines text with other types of data, which lets the AI give more precise and useful answers.

Example

If a user asks:

“Explain what is happening in this image.”

A multimodal model combines the text query with the visual data and produces an answer that addresses both.
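The request in this example can be pictured as a single message that carries both the question and the image. The sketch below is illustrative only: `TextPart`, `ImagePart`, `build_request`, and `modalities` are hypothetical names made up for this article and do not correspond to any particular vendor's API, and the image bytes are a placeholder rather than a real file.

```python
from dataclasses import dataclass
from typing import List, Union


@dataclass
class TextPart:
    """A piece of text in a request (hypothetical type)."""
    text: str


@dataclass
class ImagePart:
    """An image in a request (hypothetical type)."""
    data: bytes
    media_type: str


Part = Union[TextPart, ImagePart]


def build_request(question: str, image_bytes: bytes,
                  media_type: str = "image/png") -> List[Part]:
    """Bundle an image and a text question into one ordered list of parts."""
    if not question.strip():
        raise ValueError("question must not be empty")
    if not image_bytes:
        raise ValueError("image_bytes must not be empty")
    # The image comes first so the question can refer to "this image".
    return [ImagePart(data=image_bytes, media_type=media_type),
            TextPart(text=question)]


def modalities(parts: List[Part]) -> List[str]:
    """Name the modality of each part, in order."""
    return ["image" if isinstance(p, ImagePart) else "text" for p in parts]


# Placeholder bytes stand in for a real image file.
request = build_request("Explain what is happening in this image.",
                        b"placeholder image bytes")
print(modalities(request))
```

The point of the sketch is that the model receives both parts together, so its answer can depend on the image and the wording of the question at the same time.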

How AI Understands Images

By 2026, multimodal AI has become far better at understanding images. Models are trained on large datasets to identify objects, patterns, and relationships.

Capabilities

- Object detection

- Scene analysis

- Facial recognition

- Text extraction (OCR)

- Visual reasoning

In Multimodal AI in 2026, an image is not analyzed in isolation; the accompanying text provides context that improves accuracy and usability.

Example

Upload a product photograph and ask:

“Is this suitable for outdoor use?”

To answer, the model has to read both the image and the question.

How AI Understands Video

Video understanding is among the most complex capabilities of Multimodal AI in 2026. It involves analyzing sequences of frames together with audio and contextual information.

Capabilities

- Frame-by-frame analysis

- Motion detection

- Audio transcription

- Scene understanding

- Event recognition

With these capabilities, AI can summarize a video, extract key points, and answer questions about its content.

Example

- Upload a tutorial video

- Ask for a summary

- Get detailed insights with Multimodal AI in 2026
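Frame-by-frame analysis of a long video is expensive, so one common simplification is to analyze a small, evenly spaced subset of frames. The following is a minimal sketch of that idea, assuming uniform sampling; the function name `sample_frame_indices` and the example numbers are illustrative, and real systems may choose frames differently (for example, at scene changes).

```python
from typing import List


def sample_frame_indices(total_frames: int, num_samples: int) -> List[int]:
    """Pick evenly spaced frame indices from a video.

    Returns the middle frame of each of `num_samples` equal-length
    segments, or every frame if the video is shorter than that.
    """
    if total_frames <= 0 or num_samples <= 0:
        return []
    if num_samples >= total_frames:
        return list(range(total_frames))
    step = total_frames / num_samples
    return [int(step * i + step / 2) for i in range(num_samples)]


# Example: choose 4 frames from a 100-frame clip.
indices = sample_frame_indices(100, 4)
print(indices)  # [12, 37, 62, 87]
```

The selected frames could then be passed to an image model alongside the audio transcript, which is one way the frame, audio, and context signals described above can be brought together.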

How ChatGPT Uses Multimodal AI

In 2026, modern versions of ChatGPT are multimodal and can process and connect different types of data.

Features

- Image analysis

- Text + visual integration

- Context-aware responses

- Interactive conversations

For example, a user can upload a picture and ask questions about it. ChatGPT analyzes both the image and the question to give a relevant answer.

Core Technologies Behind Multimodal AI in 2026

Several technologies underpin Multimodal AI in 2026:

1. Transformer Architecture

Transformers give AI models a common architecture for working with different types of data.

2. Large Language Models (LLMs)

LLMs enable applications such as ChatGPT to produce human-like responses.

3. Vision-Language Models

These models learn links between text and images, and they form the backbone of Multimodal AI in 2026.

4. Cross-modal Training

Models are trained on datasets that span multiple modalities, which gives them broader coverage than single-modality training.
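One way vision-language models can link text and images is by mapping both into a shared vector space and comparing the vectors. The sketch below illustrates only the comparison step with cosine similarity. The three-number "embeddings" are hand-made toy values, not the output of any real model, and the helper names `cosine_similarity` and `best_caption` are assumptions for this example.

```python
import math
from typing import Dict, Sequence


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two equal-length vectors."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def best_caption(image_vec: Sequence[float],
                 caption_vecs: Dict[str, Sequence[float]]) -> str:
    """Return the caption whose vector is most similar to the image vector."""
    return max(caption_vecs,
               key=lambda c: cosine_similarity(image_vec, caption_vecs[c]))


# Toy vectors invented for illustration only.
toy_image = [0.9, 0.1, 0.0]
toy_captions = {
    "a dog playing in a park": [0.8, 0.2, 0.1],
    "a bowl of soup": [0.0, 0.1, 0.9],
}
print(best_caption(toy_image, toy_captions))  # a dog playing in a park
```

In a real system the vectors would come from trained text and image encoders; cross-modal training is what makes matching text and images land close together in that shared space.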

Real-World Applications of Multimodal AI in 2026

Multimodal AI is making itself felt across industries in 2026:

Healthcare

- Patient data and medical image analysis

- AI-assisted diagnostics

Education

- Interactive learning platforms

- AI tutors using Multimodal AI in 2026

Marketing

- Creation of content (images + video + text)

- Personalized campaigns

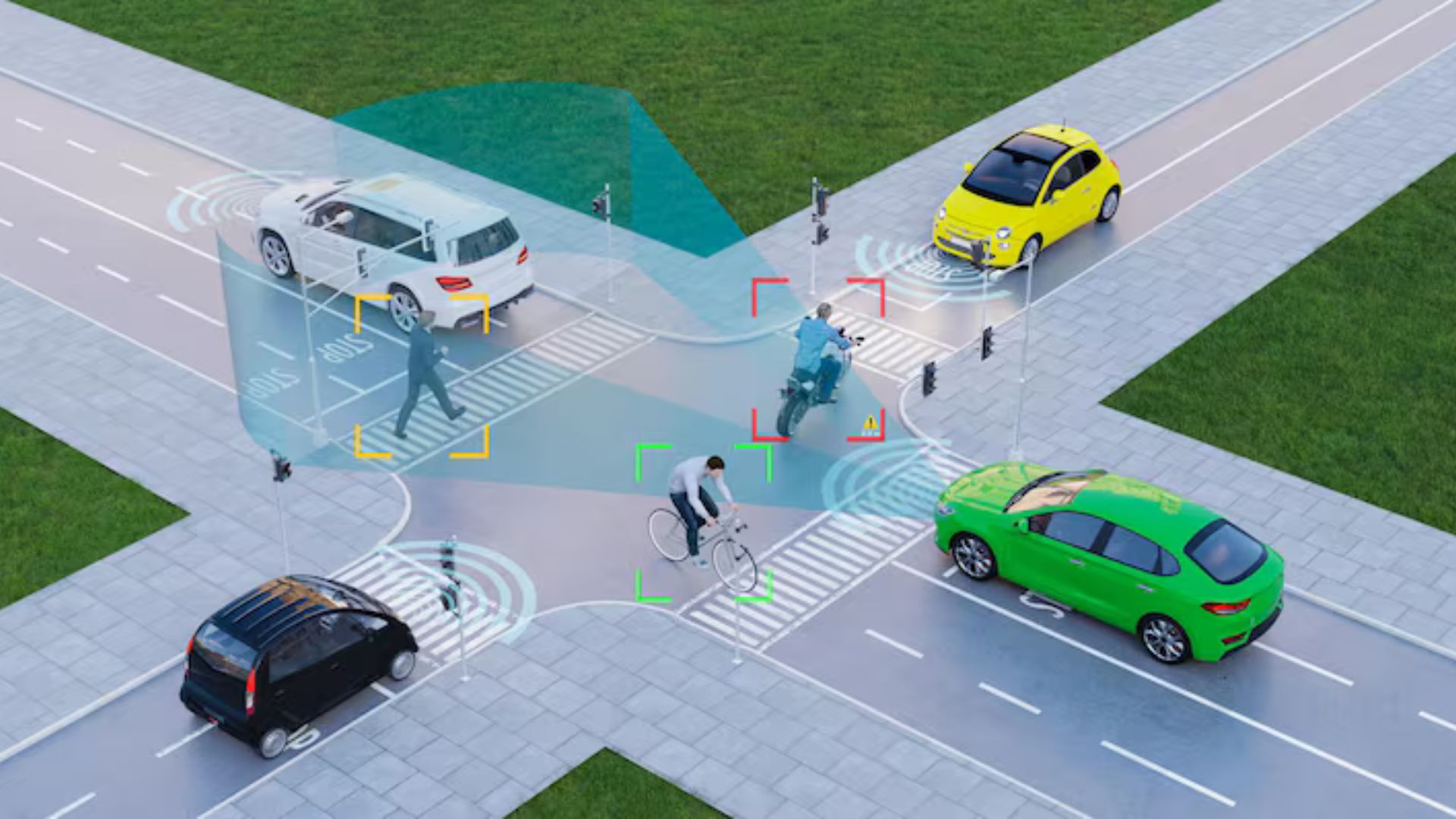

Autonomous Vehicles

- Real-time awareness of the environment

- Multimodal AI-driven decision-making

Content Creation

- Automated video editing

- Script and image generation

Benefits of Multimodal AI in 2026

1. Better Accuracy

Combining different types of data improves decision-making.

2. Human-like Interaction

AI becomes more intuitive and human-like to interact with.

3. Increased Productivity

Multimodal AI helps people complete tasks faster.

4. Broader Applications

This approach can be applied across many fields, from healthcare to entertainment.

Challenges of Multimodal AI in 2026

Despite its benefits, Multimodal AI in 2026 faces a range of challenges:

- High computational requirements

- Data privacy concerns

- Bias in datasets

- Complexity in training

These challenges need to be overcome for Multimodal AI to keep developing.

Future of Multimodal AI in 2026

The future of Multimodal AI looks very promising.

Trends to Watch

- Real-time AI assistants

- Emotion-aware systems

- Advanced video understanding

- Expansion into household devices

As the underlying technologies advance, Multimodal AI will become more powerful and more accessible.

Why Multimodal AI in 2026 Matters for Businesses

Businesses are rapidly adopting Multimodal AI in 2026 to stay competitive.

Key Advantages

- Better customer experience

- Faster decision-making

- Enhanced automation

- Better data insights

Firms that adopt Multimodal AI in 2026 will be better positioned to develop smarter products and services.

Multimodal AI in 2026 vs Traditional AI

| Feature | Traditional AI | Multimodal AI in 2026 |
| --- | --- | --- |
| Data Type | Single | Multiple |
| Understanding | Limited | Context-aware |
| Interaction | Basic | Human-like |
| Use Cases | Narrow | Broad |

This comparison highlights the advantages of Multimodal AI in 2026 over traditional AI.

Conclusion

In 2026, multimodal AI is an important breakthrough in the field of artificial intelligence. By combining text, images, and video, AI systems are smarter, more responsive, and more capable than ever.

Multimodal AI platforms such as ChatGPT clearly show the impact that Multimodal AI is having on businesses in 2026. Healthcare and content creation are only two of the areas where its influence is broad and growing.

Multimodal AI will continue to develop, and it will shape how people interact with technology. Understanding it is essential for anyone who wants to stay ahead in the AI world.

At NextGenTechLabs, we are committed to bringing you the latest insights into AI and emerging technologies. Stay connected with NextGenTechLabs to explore more innovations shaping the future.